Franklin's blog

You can find my CV here.

About me in English

Hi, I'm Franklin! I'm a software developer and first-year Master's student in Tübingen studying computational linguistics. I love programming, recreational math, and language learning.

Sobre mí en español

¡Hola, soy Franklin! Soy desarrollador de software y estudiante de primer año en un programa de maestría en Tübingen. Me encantan la programación, la matemática recreativa y el aprendizaje de idiomas.

Über mich auf Deutsch

Hallo, ich bin Franklin! Ich bin ein Softwareentwickler und Masterstudent in Computerlinguistik in Tübingen. Ich liebe das Programmieren, die Unterhaltungsmathematik und das Sprachenlernen.

Обо мне на русском

Здравствуйте, меня зовут Франклин! Я программист и студент магистерской программы в Тюбингене в области компьютерной лингвистики. Я очень люблю программирование, занимательную математику и изучение языков.

Here's where else you can find me:

Here are some of my recent web projects:

Recent posts

View all posts

Use my RSS feed

...and here are some things that aren't posts

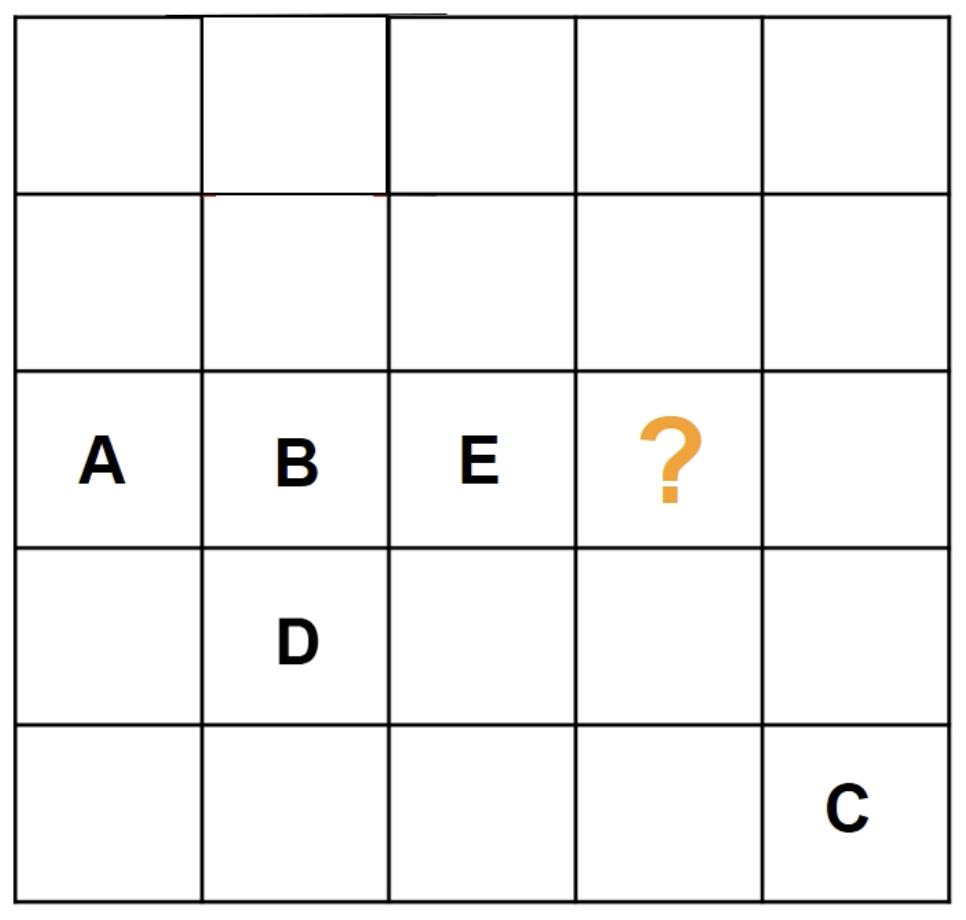

Angeblich schwere 5x5 Latin-Square-Rätsel
